Airflow DAG tutorial
12/1/2023

from datetime import datetime, timedeltaĬreate an instance of the DAG by passing the DAG name and default arguments. Set the default arguments for the DAG, including the start date and other parameters. from airflow import DAGįrom _operator import PythonOperator Start by importing the necessary modules from the Airflow library. You can customize the DAG further with additional tasks, operators, and parameters to suit your requirements. The below workflow will execute the send_welcome_message function, printing “Welcome to Intellipaat” to the log. The main aim of this workflow is to send “Welcome to Intellipaat” to the log. Let us take an example where we will create a workflow. Once Airflow is up and running, its executor becomes active within its scheduler.Ĭreating and declaring an airflow DAG is quite simple. Executor: This component is what actually gets things done.Though other options such as MySQL, MsSQL or SQLite could also work just as well. It acts like an electronic filing cabinet. Database: Your workflow and task information is stored here.Essentially, this role includes planning which tasks need to be completed when and where. Scheduler: A scheduler acts like an intelligent manager.It allows interaction and monitoring of workflows. It serves a User Interface (UI) built with Flask. Webserver: Airflow’s web-based control panel makes use of Gunicorn.Some of the core components of Apache airflow are listed below: Apache Airflow’s components work together seamlessly to help manage tasks and workflows efficiently. Take your career to the next height, enroll in Data Science Course!Īpache Airflow relies on several key components that operate continuously to keep its system functional. As per the defined schedule of the DAG, they can be manually triggered. The structuring possibilities are diverse.įurthermore, an occurrence of a DAG in action on a specific date is termed a “DAG run.” These DAG runs can be initiated by the Airflow scheduler. 
Whether they encompass a solitary task or an extensive arrangement of thousands. These DAGs make use of the advantageous characteristics of DAG structures for constructing effective data pipelines.Īirflow’s DAGs provide the flexibility to be defined according to specific requirements. They are arranged to exhibit task relationships through the Airflow UI. Each DAG represents a set of tasks intended for execution.

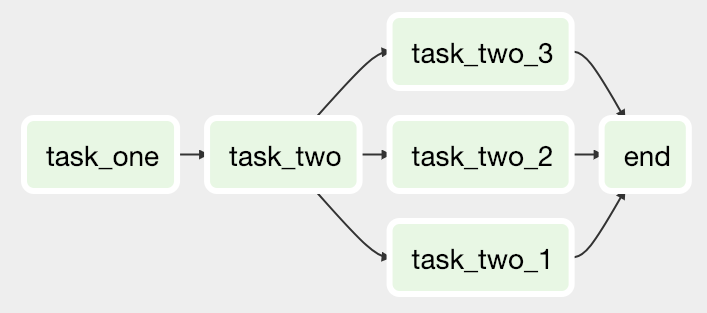

It illustrates task relationships.Īirflow DAGs refer to a Python-coded data pipeline. Graph: All tasks are visualizable as nodes and vertices within a graphical framework. This ensures the prevention of infinite loops. Let’s understand the meaning of DAG in an elaborative way:ĭirected: When dealing with multiple tasks, it’s crucial for each task to be linked to at least one preceding or succeeding task.Īcyclic: Tasks are prevented from depending on themselves. It is specially customized for Machine Learning Operations (MLOps) and other data-related tasks. It is an open-source platform for putting together and scheduling complex data workflows. An Airflow DAG, short for Directed Acyclic Graph, is a helpful tool that lets you organize and schedule complicated tasks with data.
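The welcome-message workflow from this tutorial can be sketched roughly as follows. This is a minimal sketch, not the original listing: the DAG name `welcome_dag`, the task id, the specific `default_args` values, and `catchup=False` are illustrative assumptions, and the import path `airflow.operators.python` assumes an Airflow 2.x installation. The Airflow imports are guarded so the file can also be run as a plain Python script to check the function on its own.

```python
from datetime import datetime, timedelta


def send_welcome_message():
    """Print the welcome message; Airflow captures stdout in the task log."""
    message = "Welcome to Intellipaat"
    print(message)
    # Returning the value is optional; it is returned here only for easy testing.
    return message


# Default arguments applied to every task in the DAG.
# All values below are illustrative assumptions, not taken from the tutorial.
default_args = {
    "owner": "airflow",
    "start_date": datetime(2023, 1, 1),
    "retries": 1,
    "retry_delay": timedelta(minutes=5),
}

try:
    # Assumed Airflow 2.x import paths.
    from airflow import DAG
    from airflow.operators.python import PythonOperator
except ImportError:
    # Airflow is not installed; the DAG definition below is skipped.
    DAG = PythonOperator = None

if DAG is not None:
    # Create the DAG by passing its name and the default arguments.
    # catchup=False (an assumption) avoids backfilling runs since start_date.
    dag = DAG("welcome_dag", default_args=default_args, catchup=False)

    # A single task that calls send_welcome_message when the DAG runs.
    welcome_task = PythonOperator(
        task_id="send_welcome_message",
        python_callable=send_welcome_message,
        dag=dag,
    )
```

To try it, the file would typically be placed in Airflow's DAGs folder and the DAG triggered manually from the UI. The schedule is left at the installed version's default here, so set it explicitly if you need a particular interval.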
